Case Study

Making evidence-based decisions at the speed of the market

UX research starts with a research question and ends when insights are embedded into live products. Along the way, researchers identify learning objectives, recruit participants, collect and analyze data, and share insights with stakeholders. When I worked in healthcare, going from research question to insight delivery typically took 2-3 months. What would you do if you had to run that entire process in a week? And run it as a single-person research team?

I am a UX researcher embedded in a team developing gen AI products, so this is not a hypothetical question but rather a goal to deliver on. The gen AI market is evolving at an unprecedented rate with new products and technical innovation changing the game every week. This rate of change necessitates that product teams (and the UX researchers who support them) make decisions at the pace of the market or risk shipping products that are obsolete by the time they launch.

The rate of change in the gen AI market requires UX research to accelerate its processes to support product decision-making.

Leading UX research in this context sparked the development of a process that I affectionately call “research at light speed”, i.e. going from research question to insight delivery in a week. I found that traditional UX research processes took longer than the decision-making windows dictated by the market. By the time insights were delivered, the decision had already been made. Developing and practicing this process has enabled my team to make evidence-based decisions at the speed of the market.

Here I'll share the process I developed to lead UX research at light speed as a one-person UX research team. I'll pull examples from my experience building, testing, and launching gen AI products on the Product Incubation team at IBM Research. Insights from my studies on this team have influenced BeeAI, watsonx.ai, the AI Alliance, and the IBM Granite Models (LLMs).

I've influenced many products and tools by conducting research at light speed.

Recruiting at light speed

As a one-person research team, how could I recruit participants in 1-3 days in order to deliver insights within tight decision-making timeframes? In previous roles, I'd recruited primarily external participants through recruiting agencies and lists of existing product users. At IBM, I began to recruit from my 300,000 colleagues, identifying IBM employees who met my criteria for nearly every study. This allowed me to reduce the time needed to recruit participants from 1-3 weeks to 1-3 days. While some studies still required external participants, I shaved days or even weeks off most timelines by shifting my default research participant from external to internal.

New recruiting channels

Recruiting internally opened new channels. I found the most efficient way to reach potential internal participants was Slack. I posted calls for participants in IBM's massive internal Slack channels, especially those devoted to generative AI topics. When studies required active users of a particular product, I recruited through Slack channels dedicated to that product or used product analytics to identify more specific participants based on in-product behavior.

I use Slack as a recruiting channel to connect with internal users at speed.

Establishing rolling research & sponsor users

In a few cases, decision-making windows were too small to utilize Slack channel recruiting. In these scenarios, I learned to recruit before the research questions emerged. I noticed that research questions tended to cluster around certain types of users for several consecutive weeks. When the first research question emerged focusing on a new user type, I worked with stakeholders to clearly define that type. I then identified a large pool of potential participants and set up rolling research, scheduling a fixed number of interviews each week without knowing what research questions would be prioritized. These recurring interviews accommodated quick pivots in product objectives.

Depending on the needs of the stakeholder and the complexity of the feature we were studying, I would also identify a group of sponsor users. These were participants who agreed to take part in multiple qualitative interviews, often testing several iterations of the same prototype over multiple weeks. This saved time in recruiting and also reduced the length of interviews, because I didn't need to reintroduce the often complex gen AI features we were designing in every interview.

Rolling research and sponsor users are two methods I use to decrease recruiting time.

Acting on signal (rather than proof)

Once you've recruited participants and started collecting data, the next question is when to stop. When have you collected enough evidence for the team to be confident basing a product decision on that data?

In previous roles, my teams asked for proof that the insights we'd uncovered were correct. Proof can be achieved in multiple ways. Statistical analysis allows us to say with a stated level of confidence that the relationships we've uncovered are unlikely to be due to random chance. Qualitative research best practices use insight saturation to identify when we've collected enough data to be confident in the patterns that we've captured.

For the first time, I was part of a team that asked for signal rather than proof. Signal is a pattern that is still emerging in the data, and as a researcher, I capture it much earlier in my process. The speed of the gen AI market required that my team make many decisions before we had proof. In these scenarios, collecting the amount of data that proof required would cause us to miss the decision-making window entirely.

Signal is the indication of a pattern. Proof is evidence that a pattern exists.

Reducing sample size

I first started collecting signal by reducing the number of participants in qualitative studies. I found that I was able to start seeing signal in concept testing interviews with as few as four participants. Reducing qualitative sample sizes necessitated reducing the diversity of the participant mix in each study. I worked with stakeholders to ruthlessly define the primary users for each feature we were testing and excluded others from participating.

Launching to learn

The second way I started acting on signal was by framing a launch as a learning opportunity rather than the moment where everything is correct. My team launched and maintained both internal and external gen AI products, and I found that the risk of “getting it wrong” at launch was much lower when working on an internal product. Internal users were eager to share feedback, and they didn't give up immediately when the experience was a little bumpy. This mindset made pre-launch research for internal products more nimble, allowing us to focus on a small number of essential questions and answer the rest once the product was in users' hands.

Sharing insights at light speed

What's the quickest way to deliver actionable insights to stakeholders? The UX research standard is a slide presentation at the end of a study that carefully illustrates process and insights with a high degree of polish. While these are effective storytelling tools, when I'm doing research at light speed this type of presentation makes my stakeholders' jobs harder. It takes several days to create these slide decks, increasing the time before insights can be acted on and potentially making decision-makers miss the window of opportunity.

To provide the speed my team needed, I developed several ways to share insights that required less time to create while still communicating actionable insights.

Emerging insights shared weekly

First, I eliminated the “big reveal” presentation. Instead of presenting findings once at the end of a study, I shared weekly insights as they emerged throughout the study. Sharing these insights at varying levels of fidelity allowed me to tailor my study to the changing needs of my stakeholders. My stakeholders were able to tweak the learning objectives as the study progressed to address shifting strategy. For example, they could ask for higher levels of certainty when emerging insights surprised them, and I could tweak the study design to gather additional context or a larger sample size.

Separate presentations for designers and for leadership

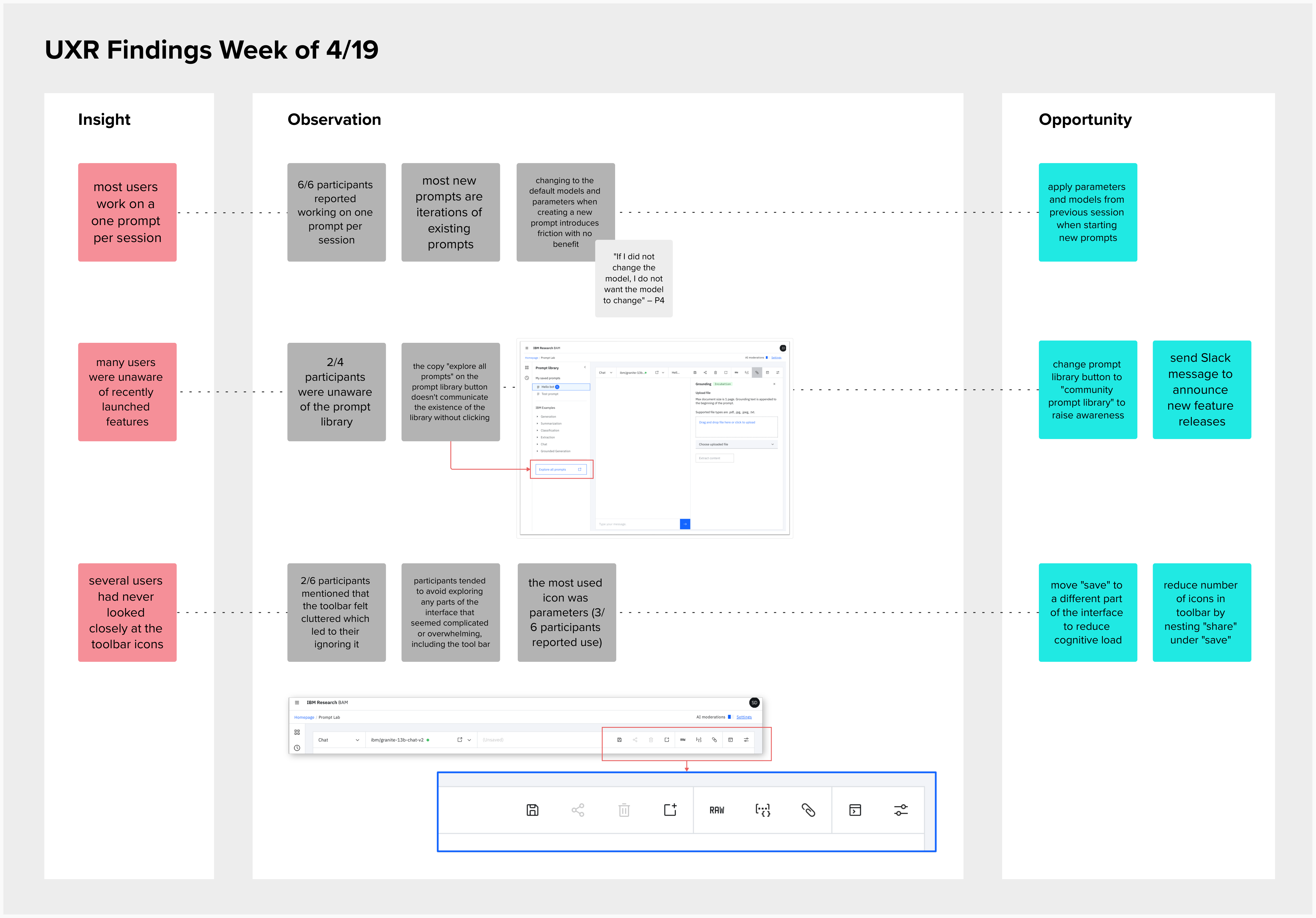

At the end of each study, I began presenting my findings twice, because UX research has two different audiences. I presented detailed findings to the design team, and I presented a separate executive-level summary to leadership. In the design meeting, I shared a digital whiteboard illustrating observations, insights, and design opportunities. In the leadership meeting, I presented fewer than ten slides highlighting key insights and strategy changes. By tailoring my presentations to designers and leadership separately, I focused their attention on the insights that were most actionable for each.

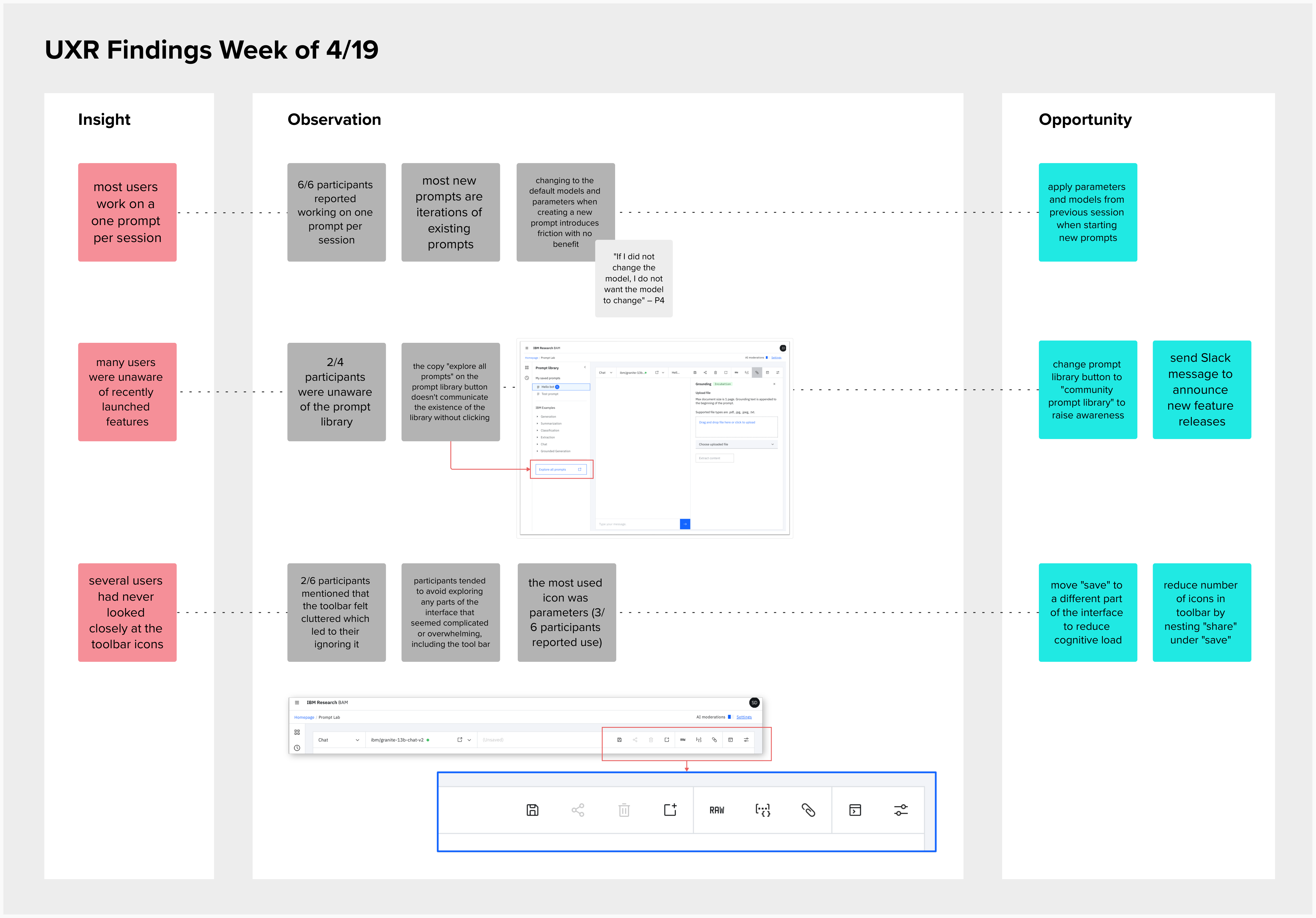

An example of a whiteboard presentation I made to communicate weekly learnings to stakeholders.

Create artifacts for stakeholders to share

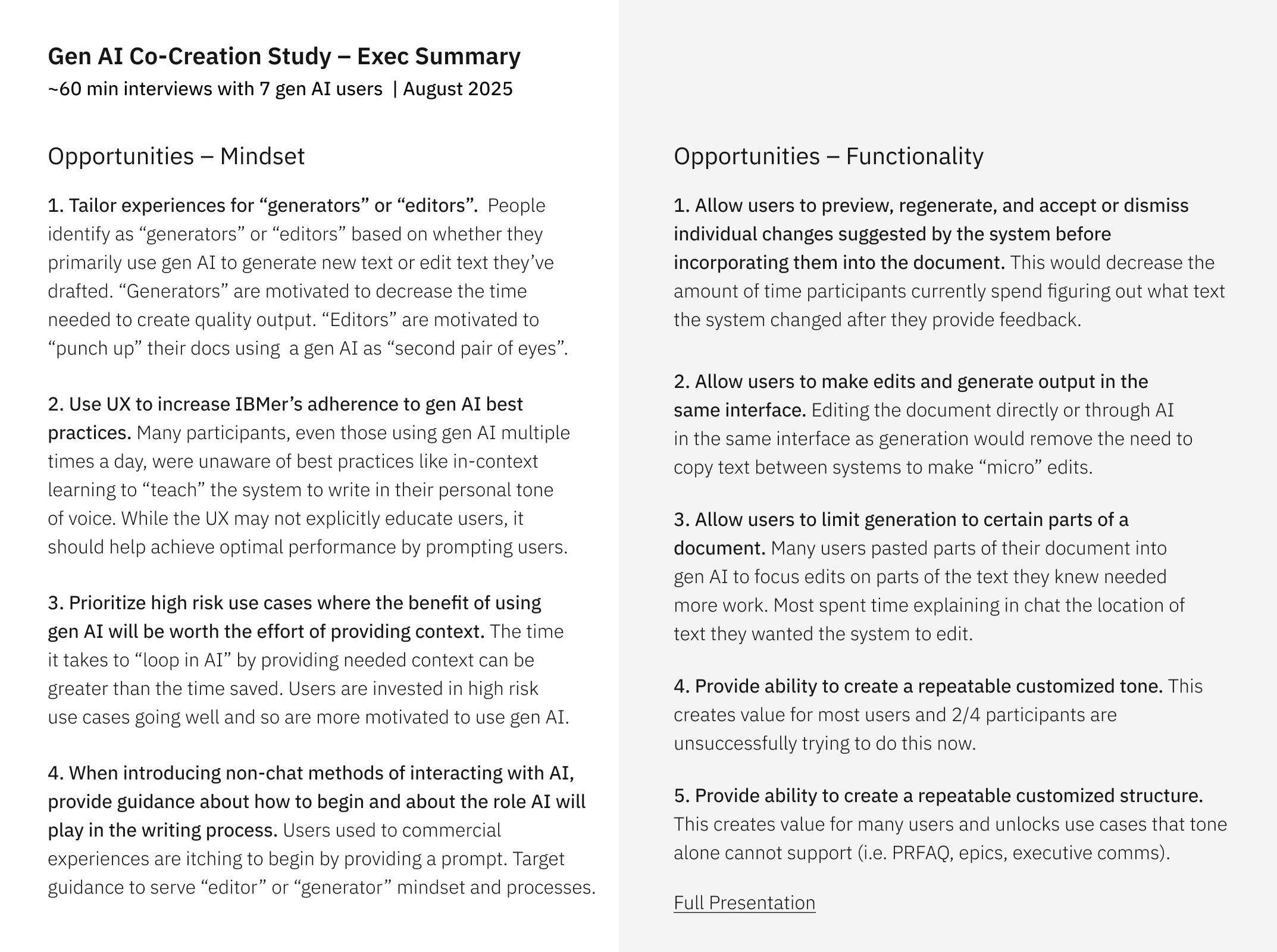

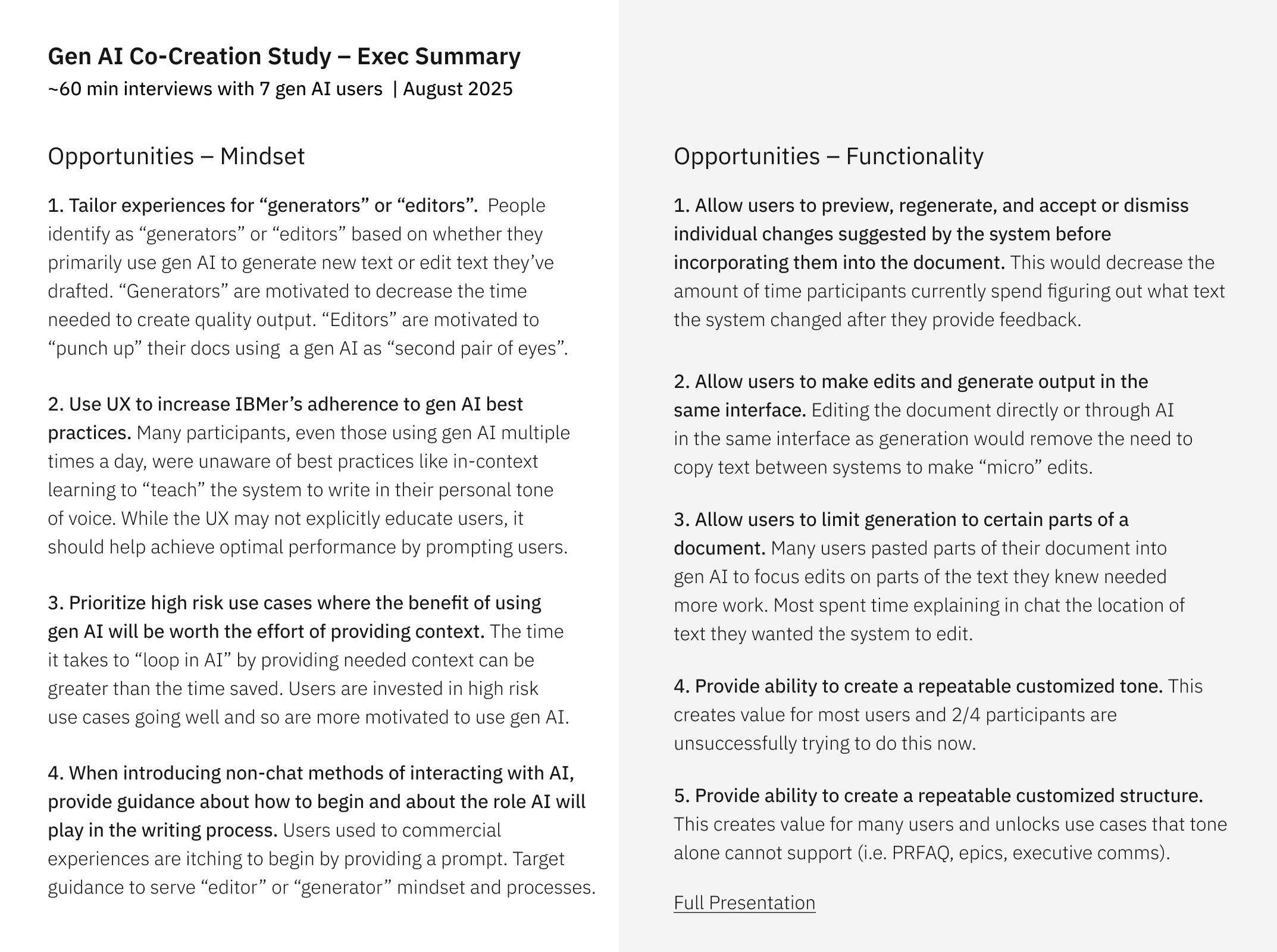

I also started creating a new artifact for my stakeholders to share. The typical forty-page UX research PowerPoint deck may be helpful during a presentation, but it's not ideal for stakeholders to use to defend their decisions to leadership or socialize findings with their peers. I began producing a one-page PDF at the end of every study summarizing the key insights and their implications for product strategy and UX. PMs quickly shared this up the chain of command and with other decision-makers, gaining greater visibility for my insights and getting leadership buy-in.

An example one-pager I created so leaders could socialize UX research with a single sharable artifact.

Increasing the number of evidence-based decisions in a launched product

Practicing these methods to execute UX research at light speed as a single-person research team has meant that more evidence is collected quickly enough to inform key product decisions. I designed and executed eight studies in five months, interacting with 155 users. During this time, I moved from research question to insight in an average of five days.

These insights drove key product and design changes including:

- Identified a mismatch in product-market fit for two gen AI products that resulted in significant strategy shifts.

- Derisked the AI Alliance's strategy by avoiding investment in a proposed project that did not address user needs.

- Increased the likelihood of success for first-time users by improving the accessibility of Agent Stack docs, the second most GitHub-starred open source project at IBM.

- Shifted the development of the Granite AI Models to address user priorities.

During my two years with this team, we've launched or improved multiple gen AI products shaped by UX research conducted at light speed. These products include BAM (which became the Red Dot award-winning watsonx.ai) and the BeeAI Framework and Agent Stack (Fast Co Innovation by Design award honorees and now Linux Foundation projects). In an alternate universe where I only used traditional UX research methods, the majority of key decisions across these products would have been made before UX research could deliver insights. I'm proud to have increased the number of evidence-based product and design decisions.

But don't take my word for it! I run a yearly survey on the impact of UX research with my team, and this is what they have to say about UX research at light speed:

- “Samantha designs and runs very agile (and well-structured!) studies that reduced time for insight.” — Design Principal

- “Samantha has a strong process and organization. Her insights are always actionable and relevant.” — Product Manager

- “I like the amount of communication Samantha provides...I know this is a lot of work... but it's helpful to consume learnings...in a digestible way.” — Design Principal

- “Samantha had a huge impact on getting this off the ground and on schedule.” — IBM Fellow

I presented a talk at UXPA Boston based on this article called “Building the plane as it flies through a black hole: revising five UX research practices for generative AI development”.

To see a case study of a UX research project I ran at light speed, check out BAM.